By Lina Kanan

April 13, 2026

Change Detection: Your Language Experience Influences Attention to Speech in Noisy Environments

Have you ever wondered if the language(s) you speak bias your attention to certain sounds in busy real-world environments?

Woman walking on a busy Toronto street. Photo Source: Canva AI.

Imagine walking down the busy streets of downtown Toronto. You might hear a lot of competing sounds around you: music playing from the cafe, dogs barking, cars honking at each other, and strangers having conversations as you walk past them. Despite hearing all these noises, you find yourself turning your head to listen to the conversation of those strangers over the other sounds. Interestingly, though, not all speech is detected equally: how readily we notice it depends on our individual language experience.

Preference for Speech over Other Sounds

New research by Anna Liu, a PhD student working with Dr. Christina Vanden Bosch der Nederlanden at the LAMA lab at the University of Toronto, has revealed that in busy sound environments we are biased towards speech in the languages we know. Humans prefer to attend to speech over other sounds in their environment, which makes sense, as speech is a socially relevant sound necessary for communication. What was less clear is whether we are naturally inclined to attend to all speech sounds, or whether we tend to attend to speech in the language(s) we are familiar with. As Anna Liu put it: “we do attend to speech more than other types of sounds, but… since we are in a multilingual environment, do we attend to all forms of speech equally?”

Searching for the Answer

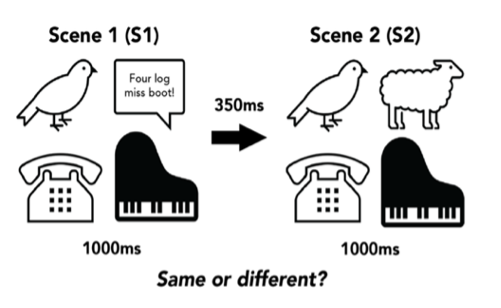

To answer this question, Anna designed a change detection experiment and recruited participants from three language backgrounds: English-Mandarin bilinguals, English monolinguals, and English-Other bilinguals (individuals who spoke English and a language other than Mandarin). Auditory change detection tasks test our ability to notice changes in auditory (sound) scenes. The participants heard short auditory scenes that contained four different sounds playing simultaneously. These sounds included speech (English or Mandarin), music, environmental sounds, and animal sounds. After the first auditory scene, a second scene was played almost immediately, and the participants had to indicate whether the two scenes were the same or different, as in the example illustrated here.

An example of a “different” auditory scene pair, in which one sound category was changed to another (here, speech changed to a sheep baa-ing).

Why Have Two Bilingual Groups?

The study used two languages, one of which (Mandarin) is a tone language, because, as Anna says, “it’s really interesting to examine a tone language vs a non-tone language…tone language speakers might be more sensitive to things like pitch”. Changes in pitch (how high or low we perceive a sound to be) are one cue that listeners seem to rely on heavily when detecting changes in the sounds around them. By comparing two bilingual groups, English-Mandarin bilinguals and English-Other bilinguals, the researchers could clarify whether it is the participants’ specific knowledge of a language that enables them to detect a change in speech more accurately, or whether bilingualism in general gives listeners an advantage.

The Language(s) You Speak Matters

As expected, the participants detected changes in speech more accurately than changes in other sound categories across the three language groups. This demonstrates that regardless of language background, all listeners showed an attentional bias to speech over other environmental sounds. These findings align with what we know about humans attending preferentially to speech over other sounds. As predicted, the researchers also found that Mandarin speakers detected changes in English and Mandarin equally well, while the other two language groups (English-Other bilinguals and monolinguals) detected changes in English more accurately than changes in Mandarin. This finding shows that listeners’ experiences speaking a particular language bias their attention toward the languages they know well.

Interestingly, however, the English-Mandarin bilinguals were significantly better at detecting changes in Mandarin speech than the English-Other bilingual group, but not significantly better than the monolinguals. This was surprising. As Anna says, “The Bilingual-Other group having the worst change detection performance was super unexpected… but I think that is something that can be attributed to the sheer diversity of the bilingual experiences people have”. There were 21 languages other than English and Mandarin represented in the English-Other group, some of which were more similar to Mandarin because they were also tone languages. However, to reach a concrete conclusion as to why this unexpected result was observed, Anna suggests that more studies are needed “to disentangle if it’s bilingualism or tone language experience or maybe something else altogether”.

Why Is This Important?

These findings have real-world implications: they help us understand how people’s language experiences influence their attention to speech and whether we are “better able to attend to someone speaking”. Just like in the example of walking downtown, you may hear many conversations around you, but you don’t attend to all speech equally. If you are a Mandarin speaker, the findings of this study suggest that Mandarin speech is likely more noticeable to you than speech in unfamiliar languages. For this reason, Anna emphasizes that “It’s okay if you don’t attend to all speech in your environment. Maybe just attending to speech that’s relevant or familiar to you, is actually beneficial and not a flaw”.

Future Research

It is important to note that although the auditory scenes used in this experiment were combinations of real-world sounds, they were not fully naturalistic, as they consisted of “random combinations” of sounds that we might not necessarily hear at the same time in the real world, like a microwave beeping at the same time as a trumpet tune and a sheep baa-ing. To address this concern, Anna Liu has designed a new study in which children and adults will play a fun change detection game. This time the changes in sound “actually occur in their environment”, such as in a park where “the dog sound changes into a bird sound”. Through this, the researchers hope to see if the attentional speech bias “extends to real world environments”.

If you are interested in reading more about change detection and learning about ongoing research, visit the LAMA lab: https://www.utmlamalab.com/

Liu, A. Y., & Vanden Bosch der Nederlanden, C. M. Change detection is biased to listeners’ known languages in complex auditory scenes. https://www.utmlamalab.com/